The Artisans of AI

- Words Celine Nguyen

- Photos Julien Sage

Meet the designers merging code with creativity to build a more human AI.

“I’ve always been into vintage things,” the creative director Everett Katigbak explains. “Anything that’s a form of old technology, in the broad sense. Letterpress and screenprinting: Those, at one point, were very modern production methods that now have a more human, tactile quality. I’m very interested in how these things emerge.”

An exhibition Katigbak designed while working at the J. Paul Getty Museum in Los Angeles early on in his career featured the enormous bellows camera used by photographer Carleton Watkins during the mid-19th century. He’s passionate about analog forms of creativity and shoots at least one roll of 35mm every week—an intriguing mindset, considering Katigbak’s day job: the first designer and creative director of Anthropic, the high-profile San Francisco artificial intelligence start-up co-founded by siblings Daniela and Dario Amodei.

Anthropic is best known for Claude, an AI assistant first released in spring 2023 as a thoughtful companion that can help users navigate the burgeoning intersection of human creativity and technology. The overall goal for the company is that Claude is less of a tool and more a collaborative canvas: It currently features three language models—Haiku, Sonnet and Opus—that are designed to help with research, writing and creative tasks, and Anthropic’s researchers have taken a conscientious approach to training the large language model behind Claude so that it’s as “helpful, honest, and harmless” as possible.

While Claude wasn’t the first chatbot on the market, it has steadily gained an insider following among technologists, especially those torn between techno-optimism and fear of AI’s potential for harm. Anthropic hopes Claude will inhabit a more nuanced terrain between these two poles, offering a responsive environment that acknowledges the intricacies of human thought and invites creativity. Instead of treating AI as an impenetrable “black box,” for example, Anthropic has tried to develop a more rounded character for Claude, in the same way a mentor might guide their protégé, so that the chatbot comes across as collaborative but not sycophantic, and respectful without being impersonal or overshadowing the unique voice of the user. “We want Claude to be empathetic and genuinely helpful, without being overly prescriptive,” Katigbak explains. A chatbot, he thinks, should “be a better listener than talker.”

Listening may be exactly what’s needed right now, given the anxieties people have about artificial intelligence. An unusually capable chatbot can trigger fears of automation, and the social and economic disruption that might follow. But encountering an incompetent AI—one that’s far from capable of taking your job—isn’t much better. Many creatives have expressed concerns that generative AI will devalue their work and contribute to cultural blandness or even stagnation.

It’s a problem that Kim Bost, a design leader at Anthropic, thinks about a lot. “We’re in a really tender moment right now [with AI],” she says. Like Katigbak’s, Bost’s career began in a traditional way: an internship at the renowned design firm Pentagram, then a stint at The New York Times as an art director. But she found herself drawn to software design, and it’s clear that, despite her scrupulous awareness of people’s anxieties, she believes that technology can be a positive force. “People are trying to understand these tools,” she says, explaining that creatives are increasingly curious about “where they’re valuable in their practice.” They’re also, she adds, “trying to find tools they can trust.”

From left to right: Kyle Turman, Kim Bost and Everett Katigbak, part of the design team at Anthropic.

While many artists and creatives have so far steered clear of AI—out of unfamiliarity, cautiousness or hostility—others have embraced it as another artistic tool. The Canadian writer Sheila Heti (interviewed on page 164) trained a chatbot to be the narrator of her short story “According to Alice,” published in The New Yorker. The experimental musical artists and composers Holly Herndon and Mat Dryhurst trained a machine learning model on Herndon’s voice that anyone can use online. (The model, Holly+, is a “vocal deepfake” that reflects Herndon’s interest in more iterative, collaborative music—and an ingenious way to subvert the fear of artistic theft and copying.) And the multidisciplinary designer Ezra Miller, who’s worked with fashion brands such as Balenciaga and musicians such as Caroline Polachek, uses AI to deform photographs of architecture, landscapes and interiors. In all these works, the artist’s hand is still present—it’s just augmented by AI.

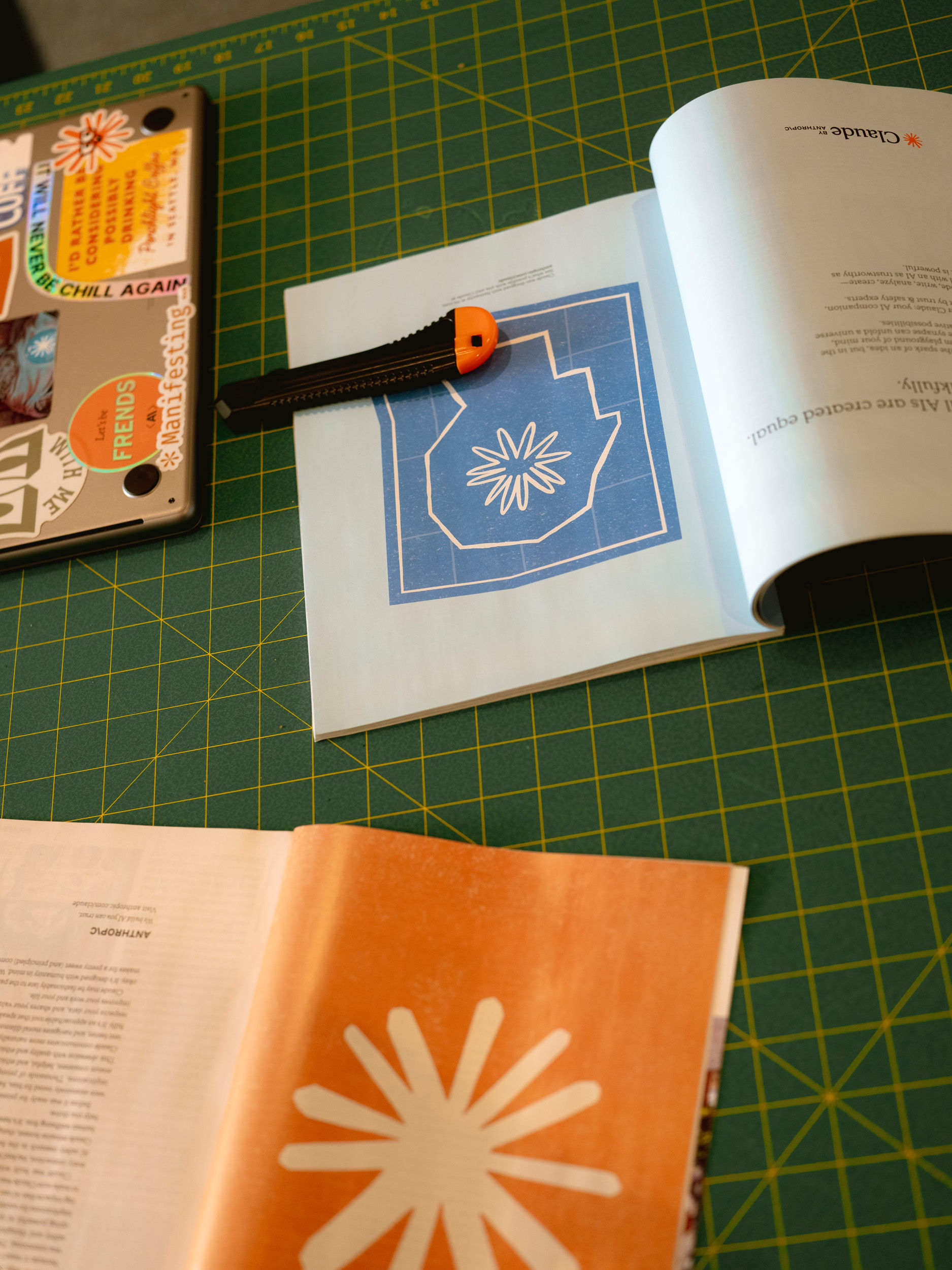

“We talk a lot about the idea of the mark of the maker,” says Kyle Turman, who joined the design staff at Anthropic a few months after Katigbak. The two collaborated on Claude’s early interface and brand identity (soft beige tones, hand-drawn illustrations, serif typeface) with an ambitious goal in mind: How can software, especially software meant to facilitate creative expression, feel personable and handmade? Claude’s visual identity, Katigbak says, “leans into the imperfections of human, handmade things… There’s a warmth and tactility that we try to bring into the interface.” One of the most distinctive features of the interface is the “spark,” a hand-illustrated asterisk that stays still when Claude is listening, and flutters gently while it responds, like a 21st-century Clippy—albeit more abstract than anthropomorphic. The goal is to make people feel as if “the software acknowledges you as a person and reacts to you,” says Turman. That feeling, they hope, will “give a bit of trust back to the user.”

A sense of trust and safety can open the door to what Bost describes as “expansive moments.” She hopes that artists, writers, designers and other makers can use Claude throughout their creative processes, and in ways that complement their natural interests. Turman, whose personal creative pursuits include music-making, drawing and occasionally painting, describes how they use Claude to help them research and assemble reference points. “What can be really fun and interesting, especially as an art history nerd,” says Turman, “is to ask: There’s a vibe I’m trying to convey and experience. Here’s what I’m thinking about. Which other artists have thought about this before?”

It often seems as if Claude’s designers are trying to recreate the best parts of their design education and figure out how to deepen those experiences with AI. “I grew up in this design era where we lived and breathed and died critiques,” says Katigbak. As a young art school student in Pasadena, that meant pinning up work on the studio walls for his peers to respond to. Chatbots like Claude, he suggests, can act as thoughtful interlocutors and critique partners in a similar, meaningful way. “When I’m refining an idea,” he says, “I never outsource the idea to AI.” But Claude does help him “vocalize an intention or thought…and bounce things back at me without judgment.” In the early years of his career, he says, “There wasn’t a way for designers to [prototype] at a rapid cadence and sharpen their skills in such a compressed amount of time.” He envies the resources available to younger designers today: “They have access to stuff that we never had in our formative stages. In many ways, they’re way further ahead.”

Bost’s face lights up when she starts thinking about AI’s potential in art and design education. She’s enthusiastic about the work of educators like Kelin Carolyn Zhang, who teaches an AI design studio at the Rhode Island School of Design. Zhang’s own practice combines a deep affection for the arts with an interest in technology: Her cheerfully retro Poetry Camera project, designed in collaboration with Ryan Mather, is an instant camera that writes AI-generated poems about what it sees, instead of producing photos. Zhang’s classes help students tackle sophisticated coding projects—with Claude’s help. For the students, being able to make something real—and not be hindered by their nascent technical skills—is transformative. Turman concurs: “It’s helped people take an idea and make it into a reality… I’m seeing more ideas come to fruition a lot faster.”

Kim Bost, design leader at Anthropic, at the company’s headquarters in San Francisco.

Speed, however, isn’t the only thing that Anthropic’s designers are interested in. “Every paradigm shift in technology,” Katigbak muses, “has promised more efficiency and more productivity.” But the more interesting question, he says, is whether technology can help people with “that last little bit” of the human experience: self-actualization.

“On one hand,” he says, “I’m this anachronist: I drive ’60s cars, I type with typewriters. But I’m also a futurist, and I think about how technology continues to evolve while human creativity stays the same.” For Katigbak and his colleagues, there’s no fundamental conflict between revering the past and pushing technology forward. If anything, AI might heighten our appreciation for certain intangible qualities: discernment, intuition, subjectivity, intention. When it comes to physical crafts, he suggests, the ease of digital fabrication may “add more value and meaning” to, say, a handmade ceramic.

Anthropic’s hope, of course, is that creatives will find value and meaning in working with AI, too. “I believe that people, particularly designers and artists, choose products because they see them as a reflection or an extension of themselves,” Bost says. Artists and writers develop attachments to specific pens, pencils and brushes. Creatives working today might develop similar attachments to AI assistants like Claude.

“If we think about the potential harms,” Turman adds, it’s possible that “someone could automate a bunch of content and art,” thereby devaluing creative and artistic labor. But they think a different future is more likely: “It’s going to be more important that your own perspective is added.” Making creative technologies such as Claude more accessible to creative people, according to Turman, will ultimately emphasize “how your own taste and personality come through in the things you make.” The goal isn’t to automate away someone’s point of view; it’s to help them express it in new ways.

This article was produced in partnership with Anthropic.